Matrix Calculator

Solve Matrix Algebra: Multiplication, Inverse, and Determinants



📋Steps to Calculate

- Set the dimensions of your matrices (rows x columns).

- Fill in the numeric elements for each matrix.

- Select the operation (multiplication, determinant, inverse, transpose) and click "Calculate".

Mistakes to Avoid ⚠️

- Multiplying matrices in the wrong order. Unlike scalar multiplication, matrix multiplication is not commutative: AB and BA generally differ, and for non-square matrices one of the two products may not even be defined.

- Attempting to multiply matrices with incompatible dimensions. The number of columns in the first matrix must equal the number of rows in the second.

- Entering values in transposed order by mistake (typing rows as columns). When the dimensions still match, as with a square matrix, the result has the right shape but the wrong numbers, and no error message appears.

- Attempting to compute a determinant for a non-square matrix. Determinants are defined only for square matrices (n by n).
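The dimension rules in the list above can be checked before any arithmetic is attempted. The following is a minimal sketch in plain Python, assuming matrices are stored as lists of equal-length rows; the helper names `shape`, `can_multiply`, and `is_square` are illustrative and are not part of the calculator.

```python
def shape(m):
    """Return (rows, cols) of a matrix stored as a list of equal-length rows."""
    if not m or any(len(row) != len(m[0]) for row in m):
        raise ValueError("matrix must be non-empty with rows of equal length")
    return len(m), len(m[0])


def can_multiply(a, b):
    """True when the columns of a equal the rows of b, so the product ab is defined."""
    return shape(a)[1] == shape(b)[0]


def is_square(m):
    """True when the matrix is n x n, the only case where a determinant is defined."""
    rows, cols = shape(m)
    return rows == cols


a = [[1, 2, 3], [4, 5, 6]]      # 2 x 3
b = [[1, 0], [0, 1], [2, 2]]    # 3 x 2
print(can_multiply(a, b))  # True: 3 columns match 3 rows
print(can_multiply(a, a))  # False: 3 columns versus 2 rows
print(is_square(a))        # False: a 2 x 3 matrix has no determinant
```

Note that a check like this cannot catch values entered in transposed order when the shapes still match; that mistake has to be caught by reviewing the input.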

Practical Applications📊

Solve systems of linear equations in algebra and engineering using matrix inversion or row reduction.

Analyze geometric transformations in computer graphics, including rotation, scaling, and projection.

Optimize multi-variable engineering and economic models where relationships between variables are expressed as matrix equations.

Questions and Answers

What is a matrix calculator and what operations does it support?

A matrix calculator is a linear algebra solver that performs operations on rectangular arrays of numbers called matrices. It supports addition and subtraction (element-wise, for matrices of matching dimensions), multiplication (via the dot-product rule, requiring compatible dimensions), determinant calculation (for square matrices), inverse finding (via Gaussian elimination, when the determinant is non-zero), and transposition (flipping rows and columns). These operations underpin a wide range of applications from solving systems of simultaneous equations to performing 3D coordinate transformations in computer graphics and training neural networks.
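As a small illustration of the element-wise operations mentioned above, here is a sketch in plain Python. The function names `elementwise`, `add`, and `subtract` are hypothetical, and the list-of-rows representation is an assumption made for the example.

```python
import operator


def elementwise(a, b, op):
    """Apply op to matching entries of two matrices with identical dimensions."""
    if len(a) != len(b) or any(len(ra) != len(rb) for ra, rb in zip(a, b)):
        raise ValueError("matrices must have identical dimensions")
    return [[op(x, y) for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def add(a, b):
    return elementwise(a, b, operator.add)


def subtract(a, b):
    return elementwise(a, b, operator.sub)


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
print(add(a, b))       # [[6, 8], [10, 12]]
print(subtract(a, b))  # [[-4, -4], [-4, -4]]
```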

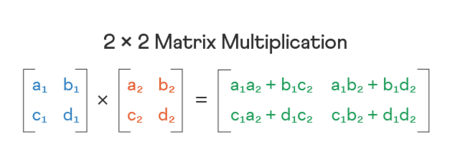

How do you perform matrix multiplication correctly?

Matrix multiplication requires that the number of columns in the first matrix equals the number of rows in the second. The element at position $(i, j)$ in the result is the dot product of row $i$ from the first matrix and column $j$ from the second: $C_{ij} = \sum_{k=1}^{n} A_{ik} \cdot B_{kj}$. For example, multiplying a $2 \times 3$ matrix by a $3 \times 4$ matrix produces a $2 \times 4$ result. A critical property to remember is that matrix multiplication is not commutative: $AB \neq BA$ in general, and reversing the order typically produces a completely different matrix.
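The summation formula above translates almost directly into code. This is a minimal sketch in plain Python, assuming matrices are lists of rows; `matmul` is an illustrative name, not the calculator's implementation.

```python
def matmul(a, b):
    """Multiply a (m x n) by b (n x p) using C[i][j] = sum over k of A[i][k] * B[k][j]."""
    n = len(a[0])
    if n != len(b):
        raise ValueError("columns of the first matrix must equal rows of the second")
    return [
        [sum(a[i][k] * b[k][j] for k in range(n)) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


a = [[1, 2], [3, 4]]
b = [[0, 1], [1, 0]]
print(matmul(a, b))  # [[2, 1], [4, 3]]
print(matmul(b, a))  # [[3, 4], [1, 2]]
```

The two printed results differ, which is the non-commutativity described above: multiplying by `b` on the right swaps the columns of `a`, while multiplying on the left swaps its rows.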

What is a matrix determinant and why does it matter?

The determinant is a scalar value computed from a square matrix that encodes fundamental properties of the linear transformation the matrix represents. For a $2 \times 2$ matrix $\begin{pmatrix} a & b \\ c & d \end{pmatrix}$, the determinant is $\det(A) = ad - bc$. For larger matrices, it is computed via Laplace cofactor expansion or LU decomposition. A non-zero determinant confirms that the matrix is invertible (non-singular) and that the system of linear equations it represents has a unique solution. A zero determinant means the matrix is singular: no inverse exists, and the corresponding system either has infinitely many solutions or none at all. The absolute value of the determinant also gives the geometric scaling factor of the transformation.
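The $2 \times 2$ formula and Laplace cofactor expansion can be sketched as follows. This is an illustrative plain-Python version that expands along the first row; the name `det` and the list-of-rows layout are assumptions, and the recursion is only practical for small matrices.

```python
def det(m):
    """Determinant by cofactor expansion along the first row (small matrices only)."""
    n = len(m)
    if n == 0 or any(len(row) != n for row in m):
        raise ValueError("determinant is defined only for square matrices")
    if n == 1:
        return m[0][0]
    if n == 2:
        return m[0][0] * m[1][1] - m[0][1] * m[1][0]  # ad - bc
    total = 0
    for j in range(n):
        # Minor: drop the first row and column j.
        minor = [row[:j] + row[j + 1:] for row in m[1:]]
        total += (-1) ** j * m[0][j] * det(minor)
    return total


print(det([[3, 1], [2, 4]]))                   # 10, so the matrix is invertible
print(det([[1, 2], [2, 4]]))                   # 0, so the matrix is singular
print(det([[2, 0, 1], [1, 3, 2], [1, 1, 4]]))  # 18
```

In the singular example the second row is twice the first, which is why no inverse exists.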

How does the calculator compute results for large matrices?

For small matrices (2x2 and 3x3), the calculator applies direct closed-form formulas. For larger matrices, it uses numerically stable algorithms: LU decomposition to compute determinants efficiently, and Gaussian elimination with partial pivoting to find inverses and solve linear systems. These are the same algorithms used in professional numerical computing libraries such as LAPACK, which underpins the linear algebra routines in MATLAB, NumPy, and R. Elimination-based methods need on the order of $n^3$ arithmetic operations for an $n \times n$ matrix, whereas naive cofactor expansion grows factorially (on the order of $n!$), which is what makes manual calculation of large determinants impractical.
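To give a feel for the elimination approach, here is a minimal sketch of a determinant computed by Gaussian elimination with partial pivoting. It is not the calculator's source code, which the page does not show; the function name `det_by_elimination` is hypothetical, and the sketch works in ordinary floating point, so results may carry small rounding errors.

```python
def det_by_elimination(matrix):
    """Determinant via Gaussian elimination with partial pivoting (floating point)."""
    a = [[float(x) for x in row] for row in matrix]  # work on a copy
    n = len(a)
    if any(len(row) != n for row in a):
        raise ValueError("determinant is defined only for square matrices")
    det = 1.0
    for col in range(n):
        # Partial pivoting: pick the row with the largest absolute value in this column.
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        if a[pivot][col] == 0.0:
            return 0.0  # the whole column is zero, so the matrix is singular
        if pivot != col:
            a[col], a[pivot] = a[pivot], a[col]
            det = -det  # each row swap flips the sign of the determinant
        det *= a[col][col]
        # Eliminate the entries below the pivot.
        for r in range(col + 1, n):
            factor = a[r][col] / a[col][col]
            for c in range(col, n):
                a[r][c] -= factor * a[col][c]
    return det


print(det_by_elimination([[2, 0, 1], [1, 3, 2], [1, 1, 4]]))  # approximately 18
```

The determinant is the product of the pivots, with a sign change for every row swap. The three nested loops are where the roughly cubic cost comes from.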

What is an inverse matrix and when do you need it?

The inverse of a square matrix $A$, denoted $A^{-1}$, is the matrix that satisfies $A \cdot A^{-1} = A^{-1} \cdot A = I$, where $I$ is the identity matrix. An inverse exists only when $\det(A) \neq 0$. Finding the inverse is the matrix equivalent of dividing by a number, and it is used to solve matrix equations of the form $AX = B$ by computing $X = A^{-1}B$. Practical applications include solving systems of simultaneous linear equations in engineering, reversing geometric transformations in computer graphics (such as undoing a rotation or scaling), and computing regression coefficients in statistics by solving the normal equations, $\hat{\beta} = (X^TX)^{-1}X^Ty$, where $X$ is the design matrix and $y$ the vector of observations.
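The following sketch finds an inverse by Gauss-Jordan elimination on the augmented matrix $[A \mid I]$ and then uses it to solve a small system. It uses exact `Fraction` arithmetic from the standard library to avoid rounding noise in the example; the names `inverse` and `solve` are illustrative assumptions rather than the calculator's API.

```python
from fractions import Fraction


def inverse(matrix):
    """Invert a square matrix by Gauss-Jordan elimination on [A | I], using exact fractions."""
    n = len(matrix)
    if any(len(row) != n for row in matrix):
        raise ValueError("only square matrices can be inverted")
    aug = [
        [Fraction(x) for x in row] + [Fraction(int(i == j)) for j in range(n)]
        for i, row in enumerate(matrix)
    ]
    for col in range(n):
        # Find a row at or below the diagonal with a non-zero entry in this column.
        pivot = next((r for r in range(col, n) if aug[r][col] != 0), None)
        if pivot is None:
            raise ValueError("matrix is singular (determinant is zero)")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        pivot_value = aug[col][col]
        aug[col] = [x / pivot_value for x in aug[col]]  # scale the pivot to 1
        for r in range(n):
            if r != col and aug[r][col] != 0:
                factor = aug[r][col]
                aug[r] = [x - factor * y for x, y in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]  # the right half is now the inverse


def solve(a, b):
    """Solve a x = b for a vector b by computing x = inverse(a) applied to b."""
    inv = inverse(a)
    return [sum(inv[i][k] * b[k] for k in range(len(b))) for i in range(len(inv))]


a = [[2, 1], [5, 3]]
print([[str(x) for x in row] for row in inverse(a)])  # [['3', '-1'], ['-5', '2']]
print([str(x) for x in solve(a, [1, 2])])             # ['1', '-1']
```

Substituting back confirms the solution: $2(1) + 1(-1) = 1$ and $5(1) + 3(-1) = 2$. For large systems, numerical libraries usually solve the system directly by elimination rather than forming the inverse explicitly.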

How do I multiply two matrices without manual errors?

The most reliable approach is to work systematically row by row. For each position $(i,j)$ in the result matrix, multiply each element of row $i$ in the first matrix by the corresponding element of column $j$ in the second, then sum all products. For a $3 \times 3$ multiplication, this means computing 9 dot products, each involving 3 multiplications and 2 additions: 45 individual arithmetic operations where a single sign error invalidates the entire result. The calculator automates this process completely, eliminating the cumulative arithmetic errors that make manual multiplication of anything larger than $2 \times 2$ unreliable in practice.
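The operation count quoted above can be checked with a short calculation. This sketch assumes the standard row-by-column rule with no shortcuts; `operation_count` is a hypothetical helper name.

```python
def operation_count(m, n, p):
    """Scalar operations needed to multiply an (m x n) matrix by an (n x p) matrix.

    Each of the m * p result entries is a dot product of length n,
    which takes n multiplications and n - 1 additions.
    """
    multiplications = m * p * n
    additions = m * p * (n - 1)
    return multiplications, additions


mults, adds = operation_count(3, 3, 3)
print(mults, adds, mults + adds)  # 27 18 45
```

For a $4 \times 4$ product the same formula gives 64 multiplications and 48 additions, which shows how quickly the opportunities for a slip accumulate.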

What is the transpose of a matrix and where is it used?

The transpose of a matrix $A$, denoted $A^T$, is formed by reflecting all elements over the main diagonal: the element at position $(i,j)$ in $A$ moves to position $(j,i)$ in $A^T$. This converts all rows into columns and vice versa, so a $3 \times 2$ matrix becomes a $2 \times 3$ matrix after transposition. Transposition is used in identifying symmetric matrices (where $A = A^T$), computing covariance matrices in statistics, adjusting weight matrices in neural network backpropagation, and expressing the normal equations in linear regression. In physics and engineering, the transpose of a rotation matrix equals its inverse, making transposition an efficient way to reverse a rotation transformation.
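A short sketch of transposition in plain Python, followed by a numerical check of the rotation-matrix property mentioned above. The helper names and the 30-degree angle are arbitrary choices for illustration.

```python
import math


def transpose(m):
    """Swap rows and columns: entry (i, j) moves to position (j, i)."""
    return [list(col) for col in zip(*m)]


def matmul(a, b):
    """Plain row-by-column product, used here only to verify the rotation property."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


a = [[1, 2], [3, 4], [5, 6]]   # 3 x 2
print(transpose(a))            # [[1, 3, 5], [2, 4, 6]], a 2 x 3 matrix

theta = math.radians(30)
rotation = [
    [math.cos(theta), -math.sin(theta)],
    [math.sin(theta), math.cos(theta)],
]
# For a rotation matrix R, the product of its transpose with R should be the identity.
product = matmul(transpose(rotation), rotation)
is_identity = all(
    math.isclose(product[i][j], 1.0 if i == j else 0.0, abs_tol=1e-12)
    for i in range(2)
    for j in range(2)
)
print(is_identity)  # True
```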
